Anthropic introduced a system to review code written by artificial intelligence

As AI-assisted programming continues to develop, it is creating new and serious challenges for specialists, Zamin.uz reports.

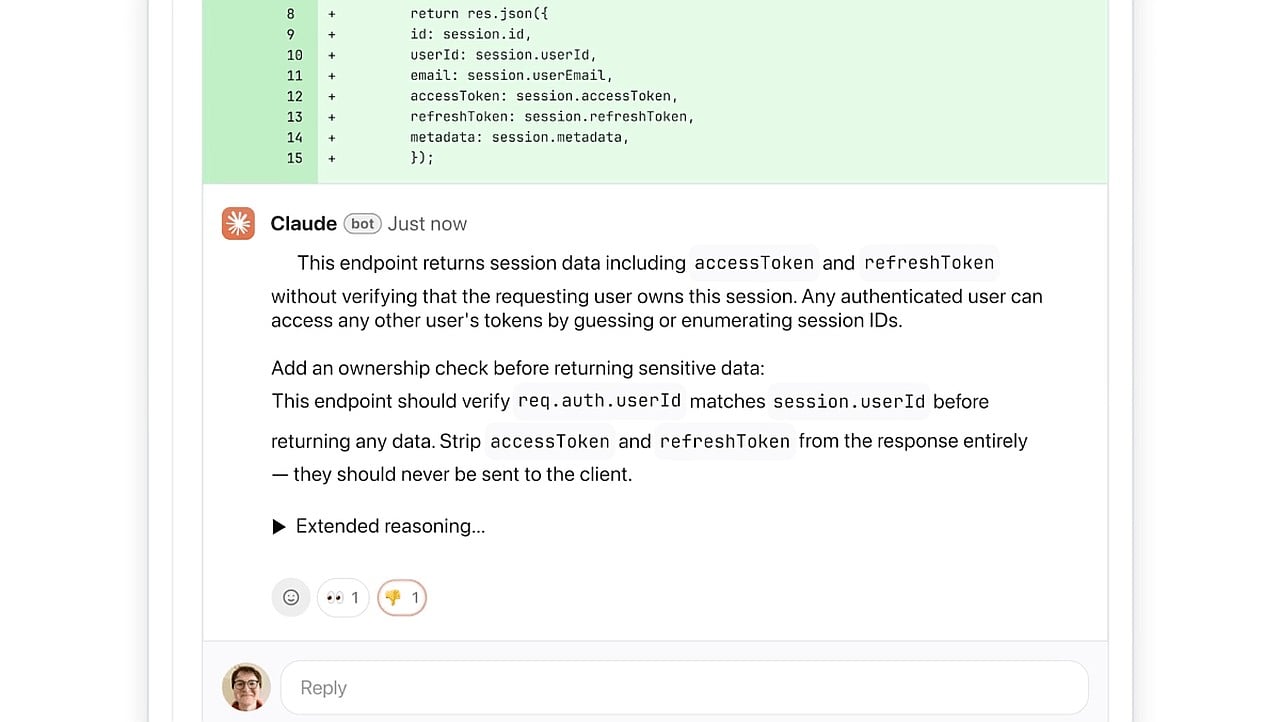

Specifically, the number of logical errors and security vulnerabilities in code written by artificial intelligence is increasing. For this reason, Anthropic has added a dedicated code review function to its Claude Code system.

The new tool allows large organizations and corporations to automatically analyze code written by artificial intelligence and identify errors so that they can be resolved early. It is fully integrated with the popular GitHub platform and examines the code in depth, primarily while changes are being introduced.

The system pays special attention not only to minor shortcomings in coding style but also to logical errors that directly affect how a program runs. The aim is to increase programmer productivity and ease the difficulty of controlling code quality.

The program marks identified issues in red, yellow, and purple. The color indicates how severe each issue is and helps ensure that it is resolved promptly.

As Cat Wu, Anthropic's head of product, emphasized, this solution is particularly important for major international companies such as Uber, Salesforce, and Accenture. The new system uses several AI agents to check code from different angles and prioritizes the most serious issues.

Currently, the new function is being offered on a trial basis to users of the Claude versions designed for teams and enterprises. The tool is expected to play an important role in improving code quality and security.