Google's TurboQuant Algorithm Cuts the Memory Use of Language Models by Up to Six Times

Google has introduced a new algorithm called TurboQuant, Zamin.uz reports.

The algorithm can reduce the memory consumption of large language models by up to six times. According to Google, the method preserves accuracy and does not significantly degrade performance.

This could make artificial intelligence systems cheaper and easier to deploy, according to the Tech.onliner.by website.

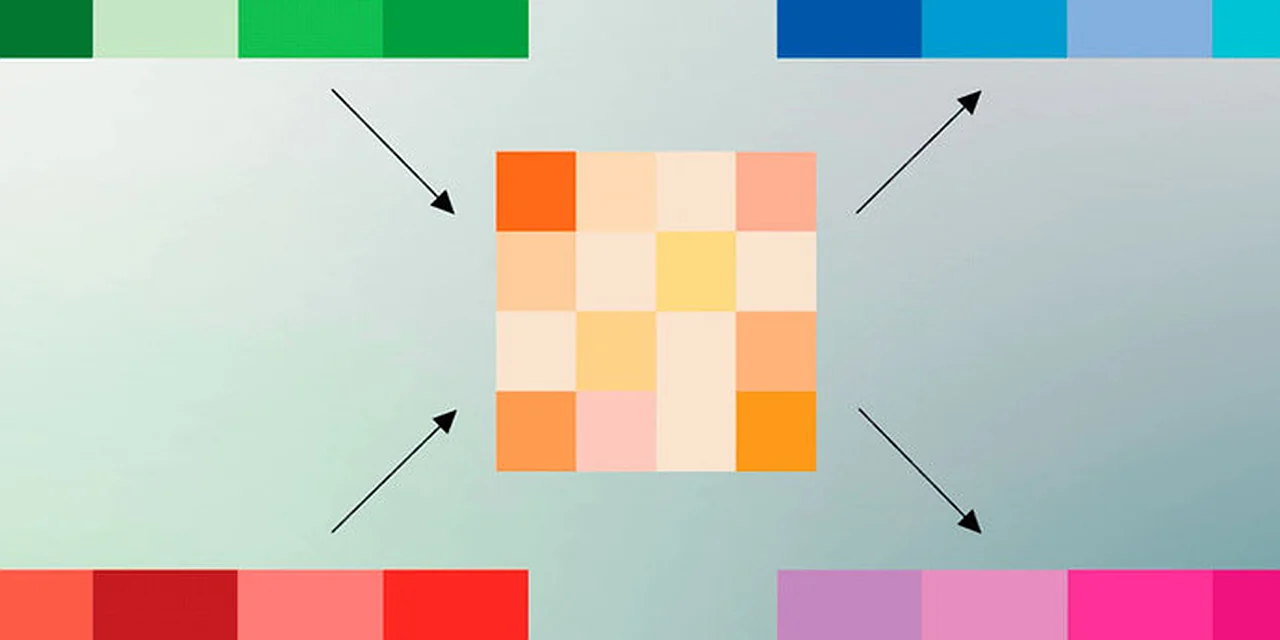

TurboQuant's main goal is to manage the cache memory that language models use during conversations more efficiently. This cache (known as the key-value, or KV, cache) stores intermediate results so that the model does not have to repeat the same calculations for text it has already processed.

However, the cache grows as the conversation with the user gets longer. This can slow down responses and increase the demand for hardware resources.
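To see why long conversations strain memory, here is a back-of-the-envelope estimate of KV cache size in Python. The model dimensions (32 layers, 8 key-value heads, 128 values per head, 16-bit storage) and the function name `kv_cache_bytes` are hypothetical and chosen only for illustration. They do not describe any specific Google model, and the sixfold figure is simply the article's headline ratio applied to the result, not a measured TurboQuant number.

```python
def kv_cache_bytes(num_tokens, num_layers=32, num_kv_heads=8,
                   head_dim=128, bytes_per_value=2):
    """Approximate KV cache size in bytes (hypothetical model dimensions).

    The factor 2 counts one key vector and one value vector stored
    per token, per layer, per key-value head.
    """
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    return values_per_token * num_tokens * bytes_per_value


for tokens in (1_024, 8_192, 32_768):
    full_mib = kv_cache_bytes(tokens) / 2**20
    # Apply the article's "up to six times" ratio; illustrative only.
    compressed_mib = full_mib / 6
    print(f"{tokens:>6} tokens: {full_mib:8.1f} MiB -> ~{compressed_mib:7.1f} MiB at 1/6 size")
```

With these assumed settings, each token adds 128 KiB to the cache, so a 32,768-token conversation needs 4 GiB. The cache grows linearly with conversation length, which is why compressing it matters most for long interactions.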

According to Google, TurboQuant works in several stages: it compresses the stored data and then corrects the errors that this compression introduces. In this way, the algorithm reduces memory pressure while also lowering computational costs.
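The two-stage idea of compressing data and then correcting the compression error can be illustrated with a generic Python sketch. This is not Google's TurboQuant: the first stage here is plain uniform 2-bit quantization, and the second is a hypothetical 1-bit correction that stores the sign of each leftover error plus one shared scale. The helper names and the random 128-value vector standing in for a cached key are assumptions made for illustration.

```python
import random


def quantize(vec, bits):
    """Stage 1: uniform min-max quantization of one vector to `bits` bits per value."""
    lo, hi = min(vec), max(vec)
    levels = (1 << bits) - 1
    step = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / step) for v in vec]  # integers in [0, levels]
    return codes, lo, step


def dequantize(codes, lo, step):
    return [lo + c * step for c in codes]


def residual_correction(vec, approx):
    """Stage 2 (hypothetical): keep only the sign of each residual and one shared scale."""
    residuals = [v - a for v, a in zip(vec, approx)]
    scale = sum(abs(r) for r in residuals) / len(residuals)  # mean absolute residual
    signs = [1 if r >= 0 else -1 for r in residuals]
    return signs, scale


def apply_correction(approx, signs, scale):
    return [a + s * scale for a, s in zip(approx, signs)]


def mse(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


random.seed(0)
key = [random.gauss(0.0, 1.0) for _ in range(128)]  # stand-in for one cached key vector

codes, lo, step = quantize(key, bits=2)
approx = dequantize(codes, lo, step)
signs, scale = residual_correction(key, approx)
corrected = apply_correction(approx, signs, scale)

err_before = mse(key, approx)
err_after = mse(key, corrected)
print(f"2-bit MSE: {err_before:.4f}, with 1-bit correction: {err_after:.4f}")
```

Using the mean absolute residual as the scale minimizes the squared error of a sign-only correction, so the corrected vector is never less accurate than the 2-bit one. The cost is roughly 3 bits per value plus three shared numbers per vector, compared with 16 bits per value uncompressed.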

Importantly, TurboQuant can be applied to existing models without retraining or other additional preparation. This makes it especially promising for artificial intelligence tools running on smartphones and other devices with limited resources.

If TurboQuant is widely adopted, it could reduce the operating costs of AI services and make it practical to run advanced models on smaller, less powerful devices.

This, in turn, could lay the foundation for broader use of artificial intelligence technologies.