Google introduces a new memory-saving technology for artificial intelligence

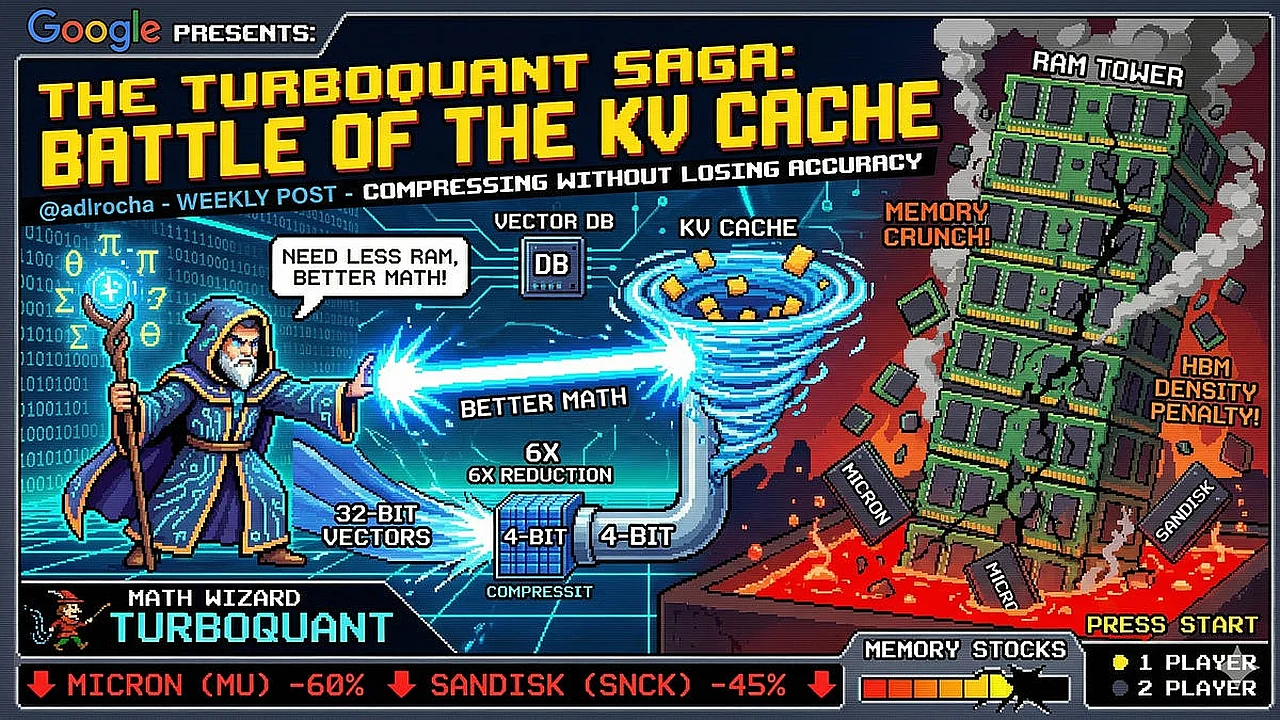

Google has introduced a new technology called TurboQuant. Zamin.uz reported on this.

The method aims to reduce memory requirements, one of the biggest hardware challenges for artificial intelligence systems. Traditionally, such systems have relied on large and expensive chips; TurboQuant instead reduces the amount of data that large language models need to keep in memory during text generation.

This development could be significant for companies developing AI systems and for investors in the memory chip market.

When a large language model predicts each new word or token, it repeatedly accesses data from the preceding text. To avoid recomputing that data every time, the key and value data for earlier tokens are stored in a special cache, known as the key-value (KV) cache. This cache eliminates redundant calculations, but its size grows with each new token added.
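To make the growth concrete, here is a minimal sketch of a common back-of-the-envelope estimate for the size of this cache. The function name `kv_cache_bytes` and the model shape (32 layers, 8 key-value heads, 128 dimensions per head, 16-bit values) are assumptions chosen for illustration; they do not come from Google and do not describe any specific model.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Estimate cache size: one key and one value vector per token, layer, and head."""
    keys_and_values = 2  # the cache holds both a key and a value vector
    return (keys_and_values * num_tokens * num_layers
            * num_kv_heads * head_dim * bytes_per_value)


# Hypothetical model shape, for illustration only.
for tokens in (1_000, 10_000, 100_000):
    size = kv_cache_bytes(tokens, num_layers=32, num_kv_heads=8, head_dim=128)
    print(f"{tokens:>7} tokens -> {size / 2**30:.2f} GiB")
```

Under these assumptions the estimate is linear in the number of tokens: ten times as much text needs ten times as much cache memory.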

Memory requirements can rise sharply, especially in long conversations, coding sessions, or document analysis. TurboQuant reduces memory demand by compressing the data held in this cache.

According to available information, the method compresses the stored vectors without significantly compromising model accuracy. Simply put, it aims to let a model retain a large amount of context while using less of the graphics processor's physical memory.
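The report does not describe how TurboQuant compresses vectors. Purely as an illustration of the general idea, the sketch below uses plain uniform quantization, a generic technique that should not be read as TurboQuant's algorithm. The helper names `quantize` and `dequantize`, the 4-bit setting, and the random test vector are all assumptions made for this example.

```python
import random


def quantize(vector, bits=4):
    """Replace each float with a small signed integer plus one shared scale."""
    levels = 2 ** (bits - 1) - 1  # 7 for signed 4-bit codes
    max_abs = max(abs(x) for x in vector)
    scale = max_abs / levels if max_abs > 0 else 1.0
    codes = [round(x / scale) for x in vector]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


random.seed(0)
# Stand-in for one cached key vector (not real model data).
key_vector = [random.gauss(0.0, 1.0) for _ in range(128)]

codes, scale = quantize(key_vector, bits=4)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(key_vector, restored))

# 128 values at 16 bits take 256 bytes; at 4 bits they take 64 bytes plus the scale.
print(f"largest rounding error: {max_error:.4f} (bounded by scale / 2 = {scale / 2:.4f})")
```

The trade-off is visible even in this toy version: fewer bits per value means less memory but a larger rounding error, and any practical method has to keep that error small enough not to harm the model's output.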

This could increase computational efficiency and ease pressure on the supply of high-speed memory. If methods like TurboQuant prove effective at scale, they could change the way we think about AI infrastructure.

High memory requirements may persist, but smart compression technologies are expected to slow the growth in demand for hardware. This would strengthen the role of software in the industry, offering a way to solve some of the problems previously thought to be solvable only through hardware.